Table of Contents

- Abstract

- Why I care about flying cat parity checks

- Coherent states as parity meters in simple terms

- Enter the flying cat

- The measurement error versus photon loss trade off

- Why three qubit checks matter for subsystem surface codes

- Resource states from repeated parity checks

- Circuit QED feasibility in numbers

- Relevance for modular fault tolerant architectures

- Questions this work raises

- Connections to related work

- My personal take away

- References

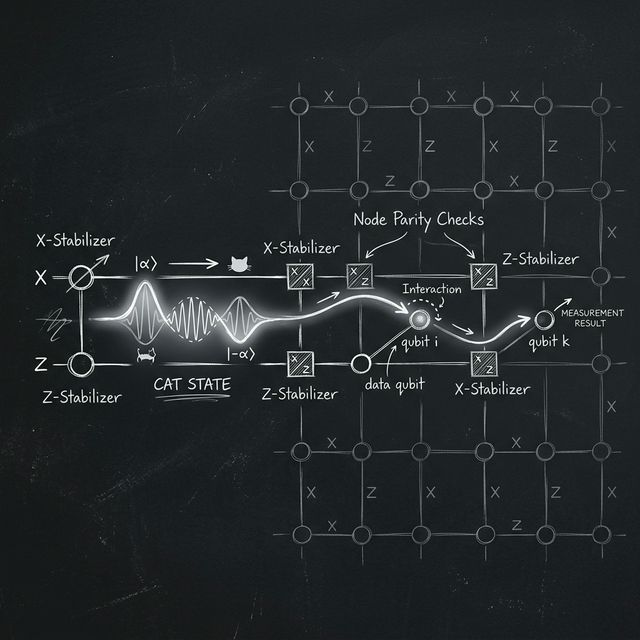

Visualizing a flying coherent state traveling across modules to execute robust, long-range parity checks.

Abstract

In this blog I walk through the main ideas of McIntyre and Coish's work on flying cat parity checks for quantum error correction, and I place it in the broader context of modular fault tolerant quantum computing.[1] I explain how propagating Schrödinger cat states can be used to perform long range multi qubit parity measurements, why there is a fundamental trade off between measurement errors and photon loss, and how the same tools generate useful multipartite resource states. Along the way I point out questions that this work naturally raises and how it connects to other proposals for modular architectures such as subsystem surface codes and noisy quantum networks.

Why I care about flying cat parity checks

Let me start in very plain language. If I want a large scale quantum computer, I either build a single huge chip with many directly coupled qubits, or I network together many smaller modules using photonic links. In the second case I still need to do quantum error correction on logical qubits that are spread across different modules, which means I need long range parity checks between distant physical qubits.

The paper by McIntyre and Coish proposes to perform these long range parity checks using flying cat states of light, that is, propagating superpositions of coherent states such as

\[ \lvert C_{\pm}\rangle \propto \lvert +\alpha\rangle \pm \lvert -\alpha\rangle, \]

which travel through the network and interact sequentially with several stationary qubits. The central message, as I read it, is that one can design these operations to be quantum nondemolition for the cat state itself, but photon loss along the path inevitably couples measurement fidelity to back action on the data qubits, and that trade off must be optimized if one wants true fault tolerance.

Coherent states as parity meters in simple terms

I find it helpful to start with the most basic mechanism. Consider a single qubit with computational basis states \(\lvert 0\rangle\) and \(\lvert 1\rangle\), and a propagating coherent state of a cavity or transmission line mode, initially \(\lvert \alpha \rangle\). The authors consider an interaction of the form

\[ \lvert s\rangle \lvert \alpha\rangle \to \lvert s\rangle \lvert (-1)^{s}\alpha\rangle, \qquad s \in \{0, 1\}, \]

so the qubit value flips the phase of the coherent amplitude.

Physically this can be realized in circuit QED by reflecting the pulse off a cavity whose resonance is shifted in a qubit state dependent way. If the light pulse then interacts with \(n\) qubits in sequence, each implementing that conditional phase flip, the net effect is

\[ \lvert s_{1} \dots s_{n}\rangle \lvert \alpha\rangle \to \lvert s_{1} \dots s_{n}\rangle \lvert (-1)^{s}\alpha\rangle, \]

where \(s = \sum_{i} s_{i} \bmod 2\) is the parity of the qubits in the computational basis.

After this interaction, a phase sensitive measurement of the light field, for example by homodyne detection of the appropriate quadrature, can distinguish approximately between \(\lvert +\alpha\rangle\) and \(\lvert -\alpha\rangle\), and thus reveal the parity of the qubits. In other words, the light pulse acts as a traveling parity meter that never needs to be stored as a long lived ancilla qubit.
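To make the bookkeeping concrete, here is a minimal sketch of the idealized, lossless picture described above. The function names `outgoing_amplitude` and `parity_from_sign` are my own illustrative choices, and the readout is reduced to the sign of a noiseless quadrature value, which is an assumption rather than a model of real homodyne detection.

```python
def outgoing_amplitude(alpha, bits):
    """Amplitude of the pulse after one conditional phase flip per qubit.

    Each qubit in state 1 multiplies the amplitude by -1, so the final
    sign encodes the parity of the bit string (idealized, no loss).
    """
    amplitude = alpha
    for s in bits:
        if s == 1:
            amplitude = -amplitude
    return amplitude


def parity_from_sign(measured_quadrature):
    """Map the sign of the measured quadrature to a parity bit (0 = even)."""
    return 0 if measured_quadrature >= 0 else 1


if __name__ == "__main__":
    for bits in ([0, 0, 0], [1, 0, 0], [1, 0, 1], [1, 1, 1]):
        alpha_out = outgoing_amplitude(2.0, bits)
        print(bits, alpha_out, parity_from_sign(alpha_out))
```

The pulse never learns which qubits were in state 1, only how many sign flips it picked up modulo two, which is exactly what a parity check should reveal.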

Enter the flying cat

Why bring cat states into the game at all if coherent states already carry parity information? The key observation is that the conditional phase shift operation is quantum nondemolition for the cat states

\[ \lvert C_{\pm}\rangle \propto \lvert +\alpha\rangle \pm \lvert -\alpha\rangle. \]

If the interaction is ideal and lossless, these states are eigenstates of the phase flip operator, so after the interaction they remain pure cat states up to a global phase.

In fact, I can think in two equivalent pictures. In the coherent state picture the parity information is encoded in whether the outgoing pulse is close to \(\lvert +\alpha\rangle\) or \(\lvert -\alpha\rangle\). In the cat code picture the propagating mode is a logical qubit, and the multi qubit parity check gate between code qubits and this flying logical qubit is essentially a sequence of controlled phase operations that are logical \(Z\) gates on the cat code.

By working in a regime where the separation between \(\lvert +\alpha\rangle\) and \(\lvert -\alpha\rangle\) in phase space is large, the homodyne readout of the cat phase can be almost projective, which is very attractive for high fidelity stabilizer measurements.
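The eigenstate property is easy to check numerically. The sketch below is my own illustration, not code from the paper: it represents the mode in a truncated Fock basis (the cutoff `dim = 40` and the amplitude `alpha = 1.5` are arbitrary assumptions) and models the phase flip as the photon number parity operator \(e^{i\pi \hat{n}}\), which maps \(\lvert \alpha\rangle\) to \(\lvert -\alpha\rangle\).

```python
import numpy as np


def coherent_state(alpha, dim):
    """Normalized coherent state in a Fock basis truncated at `dim` levels."""
    coeffs = np.zeros(dim)
    coeffs[0] = 1.0
    for n in range(1, dim):
        # Recurrence for alpha^n / sqrt(n!)
        coeffs[n] = coeffs[n - 1] * alpha / np.sqrt(n)
    return coeffs / np.linalg.norm(coeffs)


dim = 40      # Fock space cutoff (assumed large enough for this alpha)
alpha = 1.5   # illustrative real amplitude

# Phase flip alpha -> -alpha is the photon number parity operator exp(i*pi*n).
phase_flip = np.diag((-1.0) ** np.arange(dim))

plus = coherent_state(alpha, dim)
minus = coherent_state(-alpha, dim)

cat_even = (plus + minus) / np.linalg.norm(plus + minus)
cat_odd = (plus - minus) / np.linalg.norm(plus - minus)

flips_coherent = np.allclose(phase_flip @ plus, minus)
even_is_eigenstate = np.allclose(phase_flip @ cat_even, cat_even)
odd_is_eigenstate = np.allclose(phase_flip @ cat_odd, -cat_odd)

if __name__ == "__main__":
    print(flips_coherent, even_is_eigenstate, odd_is_eigenstate)
```

The even cat picks up eigenvalue +1 and the odd cat eigenvalue -1, which is the sense in which the phase flip acts as a logical \(Z\) on the flying cat qubit.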

The measurement error versus photon loss trade off

The central technical theme of the paper is a quantitative analysis of how two error mechanisms depend on the coherent state amplitude \(\alpha\):

- Measurement errors from imperfect discrimination between \(\lvert +\alpha\rangle\) and \(\lvert -\alpha\rangle\).

- Back action errors on data qubits induced by photon loss during the flying cat interaction.

Because coherent states are not orthogonal, the probability \(p_{\mathrm{M}}\) of misidentifying the sign of \(\alpha\) decreases roughly as

where \(\bar{\alpha}\) is the attenuated amplitude after losses and \(\operatorname{erfc}\) is the complementary error function. For large \(\lvert \alpha\rvert\) the overlap between \(\lvert +\alpha\rangle\) and \(\lvert -\alpha\rangle\) is exponentially small so the measurement is very reliable.
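For intuition, here is a small numerical sketch of the misidentification probability under one specific set of assumptions that are mine, not necessarily the paper's conventions: ideal homodyne detection of the quadrature \(x = (a + a^{\dagger})/\sqrt{2}\), real amplitude, vacuum variance \(1/2\), and a decision threshold at \(x = 0\). With those choices the error probability is \(\tfrac{1}{2}\operatorname{erfc}(\sqrt{2}\,\lvert\bar{\alpha}\rvert)\), and the squared overlap of the two coherent states is \(e^{-4\lvert\alpha\rvert^{2}}\).

```python
import math


def misread_probability(alpha_bar):
    """Probability of assigning the wrong sign to the amplitude.

    Assumes ideal homodyne detection with quadrature mean
    +/- sqrt(2)*alpha_bar, variance 1/2, and a threshold at zero.
    """
    return 0.5 * math.erfc(math.sqrt(2.0) * abs(alpha_bar))


def coherent_overlap(alpha):
    """Squared overlap |<alpha|-alpha>|^2 = exp(-4 |alpha|^2)."""
    return math.exp(-4.0 * abs(alpha) ** 2)


if __name__ == "__main__":
    for alpha_bar in (0.5, 1.0, 1.5, 2.0):
        print(alpha_bar, misread_probability(alpha_bar), coherent_overlap(alpha_bar))
```

Other quadrature normalizations change the numerical factor inside the error function, but not the qualitative point that the misread probability falls off very quickly with amplitude.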

Photon loss, however, grows with the average photon number \(\lvert \alpha\rvert^{2}\). The authors model the loss as a sequence of beam splitters with reflectivities \(\eta_{i}\) between successive qubit interactions, which leads to effective dephasing channels on certain pairs of qubits. For a three qubit parity check they show that to leading order the additional error probabilities scale as

associated with particular multi qubit \(Z\) errors that in the subsystem surface code are gauge equivalent to single qubit errors.

Putting these contributions together, they write an approximate total error probability

and show that it has a minimum at some finite optimal amplitude \(\alpha_{\star}\). So one cannot simply crank up the light intensity forever; true fault tolerance requires tuning to the sweet spot where misreadout and loss back action are balanced.
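To see why a finite optimum has to exist, I find a toy model helpful. The sketch below is not the paper's expression: it assumes a single loss segment with power loss fraction `loss_fraction` (a hypothetical parameter), a homodyne misread probability of \(\tfrac{1}{2}\operatorname{erfc}(\sqrt{2}\,\bar{\alpha})\) under one common quadrature convention, and a dephasing probability \(\tfrac{1}{2}(1 - e^{-2\epsilon\alpha^{2}})\) from the photons leaked to the environment, and it simply adds the two.

```python
import math


def total_error(alpha, loss_fraction):
    """Toy total error: homodyne misread plus loss-induced dephasing."""
    alpha_bar = math.sqrt(1.0 - loss_fraction) * alpha  # attenuated amplitude
    p_meas = 0.5 * math.erfc(math.sqrt(2.0) * alpha_bar)
    # The environment receives +/- sqrt(loss_fraction)*alpha; its ability to
    # distinguish the two signs dephases the qubits.
    p_loss = 0.5 * (1.0 - math.exp(-2.0 * loss_fraction * alpha ** 2))
    return p_meas + p_loss


def optimal_amplitude(loss_fraction, lo=0.1, hi=4.0, steps=400):
    """Grid search for the amplitude that minimizes the toy total error."""
    grid = [lo + (hi - lo) * k / (steps - 1) for k in range(steps)]
    return min(grid, key=lambda a: total_error(a, loss_fraction))


if __name__ == "__main__":
    for eps in (0.001, 0.01, 0.05):
        a_star = optimal_amplitude(eps)
        print(eps, round(a_star, 3), total_error(a_star, eps))
```

In this toy model the misread term falls faster than exponentially while the loss term grows roughly as \(\epsilon\alpha^{2}\), so the minimum sits at a moderate amplitude and moves to larger amplitude as the loss decreases.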

Why three qubit checks matter for subsystem surface codes

McIntyre and Coish focus on weight three parity checks for a very specific reason. The subsystem surface code construction of Bravyi, Duclos-Cianci, Poulin and Suchara shows that one can realize a version of the surface code in which the native measurements are three body operators \(XXX\) and \(ZZZ\), while the weight six stabilizers are inferred as products of these gauge operators.

In this subsystem code:

- Physical qubits live on edges and vertices of a square lattice.

- Local gauge generators have weight three so the necessary parity checks can be done with three body measurements.

- The threshold for circuit level noise stays around the percent level, which is attractive for near term devices.

Flying cat parity checks that directly implement weight three \(X^{\otimes 3}\) and \(Z^{\otimes 3}\) measurements therefore line up very naturally with this architecture. A very nice feature of the subsystem formulation is that certain correlated two qubit errors appearing as "horizontal hook errors" in the light mediated parity checks are gauge equivalent to single qubit errors and can be treated as such by the decoder. This means the somewhat messy physical error channels induced by photon loss still fit into an effective Pauli model at the code level, which is essential for standard decoding techniques.
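The gauge equivalence argument can be illustrated with a few lines of Pauli bookkeeping. This is a minimal sketch with made up qubit labels 1, 2, 3 for one triangle of the lattice: a \(Z\)-type Pauli string is stored as the set of qubits it acts on, and multiplying two such strings is a symmetric difference of their supports because \(Z^{2} = I\).

```python
def multiply_z_strings(a, b):
    """Product of two Z-type Pauli strings given as sets of qubit labels."""
    return frozenset(a) ^ frozenset(b)  # Z*Z = I, so shared qubits cancel


# Hypothetical labels: one ZZZ gauge generator on a triangle of qubits 1, 2, 3.
gauge_zzz = frozenset({1, 2, 3})

# A correlated two qubit Z error on qubits 1 and 2 of that triangle.
correlated_error = frozenset({1, 2})

# Multiplying by the gauge generator gives an equivalent single qubit error.
equivalent_error = multiply_z_strings(correlated_error, gauge_zzz)

if __name__ == "__main__":
    print(sorted(equivalent_error))
```

Since gauge operators act trivially on the encoded information, the decoder may treat the correlated error \(Z_{1}Z_{2}\) as if it were the single qubit error \(Z_{3}\).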

Resource states from repeated parity checks

I also like how the paper does not stop at error correction, but uses the same flying cat tools to build interesting entangled resource states. Two main examples appear.

Three qubit GHZ states

First, the authors show how two \(Z\) parity measurements \(Z_{1}Z_{2}\) and \(Z_{2}Z_{3}\) on three qubits initially in \(\lvert +++\rangle\) project the system into a state that can be turned into a full Greenberger-Horne-Zeilinger state

\[ \lvert \mathrm{GHZ}\rangle = \frac{1}{\sqrt{2}}\left(\lvert 000\rangle + \lvert 111\rangle\right) \]

by a simple single qubit correction depending on the measurement outcomes. If these parity checks are implemented with flying cats, photon loss again induces additional single qubit \(Z\) errors and occasional wrong corrections, but one can quantify the resulting mixed state and even feed it into entanglement purification protocols based on Calderbank-Shor-Steane codes.

This is directly relevant to modular architectures in which remote ancilla qubits at different nodes are first entangled into a GHZ state, and only then coupled to data qubits to realize long range stabilizer measurements.
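The ideal, noiseless version of this GHZ preparation is small enough to simulate directly. The sketch below is my own illustration: it takes the two parity outcomes as inputs (0 for even, 1 for odd), projects \(\lvert +++\rangle\) accordingly, and applies a single qubit \(X\) flip chosen from a lookup table. Measurement errors and photon loss are deliberately left out.

```python
import itertools

import numpy as np

BASIS = list(itertools.product([0, 1], repeat=3))  # (s1, s2, s3), index 4*s1+2*s2+s3

# Which qubit (0-based) to flip for each pair of parity outcomes.
CORRECTION = {(0, 0): None, (1, 0): 0, (0, 1): 2, (1, 1): 1}


def ghz_from_parity_checks(outcome_12, outcome_23):
    """Project |+++> on given Z1Z2, Z2Z3 parities, then correct to GHZ."""
    state = np.full(8, 1.0 / np.sqrt(8.0))  # |+++> in the computational basis
    projected = np.zeros(8)
    for idx, (s1, s2, s3) in enumerate(BASIS):
        if (s1 ^ s2) == outcome_12 and (s2 ^ s3) == outcome_23:
            projected[idx] = state[idx]
    projected /= np.linalg.norm(projected)

    flip = CORRECTION[(outcome_12, outcome_23)]
    corrected = np.zeros(8)
    for idx, bits in enumerate(BASIS):
        bits = list(bits)
        if flip is not None:
            bits[flip] ^= 1  # single qubit X correction
        corrected[4 * bits[0] + 2 * bits[1] + bits[2]] = projected[idx]
    return corrected


ghz = np.zeros(8)
ghz[0] = ghz[7] = 1.0 / np.sqrt(2.0)

if __name__ == "__main__":
    for outcomes in CORRECTION:
        print(outcomes, np.allclose(ghz_from_parity_checks(*outcomes), ghz))
```

Each of the four outcome pairs leaves a two term superposition that differs from the GHZ state by at most one bit flip, which is why a single qubit correction suffices.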

The six qubit tetrahedron state

The second and perhaps more novel example is a six qubit entangled state the authors call a tetrahedron state. One can associate each qubit with an edge of a tetrahedron and define six stabilizers, three of \(Z\) type and three of \(X\) type, each acting on the three edges that meet at a face or a vertex. The unique state \(\lvert T\rangle\) stabilized by all six operators can be written as

a coherent superposition over three Bell pairs that are perfectly correlated in which Bell state appears where.

If I place qubit pairs \((1,2)\), \((3,4)\) and \((5,6)\) at three distant nodes Alice, Bob and Charlie respectively, then simultaneous Bell measurements at all nodes yield the same random Bell label, giving two bits of shared randomness. The authors also sketch a protocol in which this tetrahedron state serves as the entangled channel for three party controlled teleportation of an arbitrary two qubit state, where Charlie can either enable or limit the teleportation fidelity depending on whether he cooperates.

Circuit QED feasibility in numbers

A natural question I kept asking while reading is whether these parity checks are actually practical given current superconducting hardware. McIntyre and Coish address this by looking carefully at a dispersive circuit QED setup: a qubit coupled to a single sided cavity with rate \(g\), detuned by \(\delta\) so that the cavity frequency acquires a qubit state dependent shift \(\pm\chi\) with \(\chi = g^{2}/\delta\).

They derive a reflection coefficient

where \(\kappa_{0}\) is the external coupling and \(\kappa_{\mathrm{int}}\) accounts for internal cavity loss. For a narrow band input pulse and a suitable choice of \(\chi\) one can realize approximately the unit magnitude phase flips required, but finite bandwidth and finite internal loss create deviations which the authors quantify in terms of an infidelity \(\varepsilon_{\mathrm{reflect}}\).
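To get a feel for the numbers, here is a sketch based on the textbook input-output result for a single sided cavity, which may differ from the paper's expression in sign and phase conventions. I assume \(r(\Delta) = (\kappa_{\mathrm{int}} - \kappa_{\mathrm{ext}} - 2i\Delta)/(\kappa_{\mathrm{int}} + \kappa_{\mathrm{ext}} - 2i\Delta)\) with \(\Delta\) the probe frequency minus the cavity frequency, where `kappa_ext` plays the role of \(\kappa_{0}\) above, and I probe at the bare cavity frequency so that the two qubit states see detunings \(\mp\chi\).

```python
def reflection_coefficient(detuning, kappa_ext, kappa_int):
    """Textbook single sided cavity reflection (assumed convention).

    detuning = probe frequency minus cavity frequency, in the same units
    as the decay rates.
    """
    numerator = kappa_int - kappa_ext - 2j * detuning
    denominator = kappa_int + kappa_ext - 2j * detuning
    return numerator / denominator


kappa_ext = 1.0        # external coupling (sets the unit of frequency)
chi = kappa_ext / 2.0  # dispersive shift chosen to give a relative pi phase

# Lossless cavity: the two qubit states shift the resonance by +chi or -chi.
r_plus = reflection_coefficient(-chi, kappa_ext, 0.0)
r_minus = reflection_coefficient(+chi, kappa_ext, 0.0)

# Same operating point with a small internal loss rate (illustrative value).
r_lossy = reflection_coefficient(-chi, kappa_ext, 0.05)

if __name__ == "__main__":
    print(r_plus, r_minus, r_plus / r_minus, abs(r_lossy))
```

In this convention the choice \(\chi = \kappa_{\mathrm{ext}}/2\) gives reflection coefficients of equal unit magnitude and opposite sign for the two qubit states, while any internal loss pulls the magnitude below one, which is one of the sources of the infidelity discussed here.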

Using experimental parameters from previous work they estimate errors around a few percent per entangling operation and argue that with modest improvements in dispersive shift and pulse shaping, values near the percent threshold of the subsystem surface code should be reachable. They also analyze additional photon loss in transmission lines and circulators, pointing out that meter scale aluminum interconnects can have attenuation of only a few percent per kilometer, while current microwave circulators contribute order ten percent insertion loss that will likely need to be reduced.

Relevance for modular fault tolerant architectures

Let me now connect the dots to modular fault tolerant quantum computing more explicitly. A modular architecture usually means many small processor cells, each implementing a local code patch, linked by photonic channels operating at microwave or optical frequencies. Nickerson, Li and Benjamin for example proposed cells of tens of qubits linked by very noisy photonic interconnects, where entanglement is distilled into shared resource states that then enable topological stabilizer measurements across the network.

Flying cat parity checks give an alternative, more analog flavor of the same story:

- Logical data remains encoded in local surface code or subsystem surface code patches in each module.

- Long range checks of weight three are performed by sending a traveling coherent state through the relevant modules, imprinting joint parity on its phase, and reading out that phase by homodyne detection.

- The error model couples measurement reliability to photon loss in a transparent way that can be optimized and potentially folded into soft information decoders that use real valued syndrome confidence instead of simple bit outcomes.

Because this mechanism requires neither single photon sources nor number resolving detectors, but only stable coherent sources and low noise quadrature measurements, it may be technologically easier to scale in the near term than fully single photon based quantum repeaters in the microwave domain. At the same time, the requirement of relatively low loss along the light path, especially in components like circulators, implies that careful engineering of the interconnect layer is crucial.

From a code design point of view, long range weight three checks are particularly appealing for emerging quantum low density parity check codes, where nonlocal check operators are needed to achieve constant rate together with good distance scaling, but geometrical connectivity on a chip is limiting. Allowing a flying ancilla to ping several distant modules in sequence relaxes those geometrical constraints.

Questions this work raises

As I read the paper from the perspective of building a future modular machine, several concrete questions kept coming up.

How to integrate soft information decoding at scale

The authors point out that their formalism actually yields the full conditional state \(\rho_{x}\) of the data qubits given a particular homodyne outcome \(x\), not just a binary parity bit, and that this soft information could be exploited by advanced decoders. I find this particularly intriguing because work on analog decoding for bosonic codes and on soft information decoders for Pauli stabilizer codes already suggests significant threshold gains.

An open question is what the best practical decoding algorithms are when each stabilizer measurement comes with a continuous valued confidence and correlated back action error probabilities derived from the flying cat model. For large scale LDPC codes with many long range checks, designing decoders that can ingest this richer syndrome information without exploding in complexity is a worthwhile research direction.
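As a small illustration of what soft information could look like, the sketch below converts a single homodyne outcome into a log likelihood ratio and a posterior probability for even parity. It rests on my own simplifying assumptions: the outcome \(x\) is Gaussian with mean \(\pm\sqrt{2}\,\bar{\alpha}\) and variance \(1/2\), even parity corresponds to the positive mean, and back action on the data qubits is ignored entirely. It is not the conditional state \(\rho_{x}\) from the paper.

```python
import math


def parity_log_likelihood_ratio(x, alpha_bar):
    """log p(x | even) / p(x | odd) for Gaussians at +/- sqrt(2)*alpha_bar.

    With variance 1/2 the densities are proportional to exp(-(x -/+ mu)^2),
    so the ratio reduces to 4 * mu * x with mu = sqrt(2) * alpha_bar.
    """
    return 4.0 * math.sqrt(2.0) * alpha_bar * x


def even_parity_posterior(x, alpha_bar, prior_even=0.5):
    """Posterior probability of even parity given the outcome x."""
    llr = parity_log_likelihood_ratio(x, alpha_bar)
    llr += math.log(prior_even / (1.0 - prior_even))
    # Numerically stable logistic function.
    if llr >= 0:
        return 1.0 / (1.0 + math.exp(-llr))
    e = math.exp(llr)
    return e / (1.0 + e)


if __name__ == "__main__":
    for x in (-1.0, -0.1, 0.0, 0.1, 1.0):
        print(x, even_parity_posterior(x, alpha_bar=1.0))
```

A hard decision decoder would keep only the sign of \(x\), whereas a soft decoder could use the posterior as an edge weight, trusting outcomes far from zero more than outcomes near the threshold.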

Compatibility with dynamical decoupling and fast gates

Realistic qubit memories in circuit QED or spin based devices are likely to rely on dynamical decoupling sequences and fast single and two qubit gates in between stabilizer cycles. The authors themselves point out that fast qubit operations can emit additional light into the cavity or transmission line and thus leak information during the parity check in a complicated manner.

I would like to understand quantitatively to what extent flying cat parity checks can coexist with continuous decoupling, or whether one must time multiplex the code operation into intervals where qubits are coupled to the cavity for parity extraction and intervals where they are dynamically decoupled from it. This interplay between control pulses and bosonic probes is subtle and seems wide open.

More elaborate encodings of the flying ancilla

In the paper only the simplest single mode cat encoding for the flying ancilla is considered, although the authors hint that more complex encodings might allow simultaneous detection of photon loss and parity, for example through cat code variants that protect against loss. Designing multimode or multicomponent cat codes tailored to light mediated parity checks, perhaps in combination with time bin or frequency bin encodings, could reduce back action even further or allow for loss tolerant measurement of higher weight stabilizers.

A related question is whether adaptive measurement schemes where the homodyne basis or displacement is adjusted based on earlier outcomes can improve the trade off between measurement error and back action beyond what a single nonadaptive homodyne setting allows.

Interplay with other modular schemes

There is a rich landscape of proposals for modular fault tolerant architectures, including the noisy network protocols of Nickerson et al. based on entanglement purification and toric codes, as well as more recent work on cavity based quantum networks and long range couplers. Flying cat parity checks offer a third axis in this design space where ancillae are bright coherent fields rather than single photons or static qubits.

I find it natural to ask whether one can hybridize these approaches. For instance, could one use flying cats to create initial noisy multi qubit resource states, then purify them using more standard discrete entanglement purification protocols, and then consume the purified states in conventional ancilla based stabilizer measurements? This hybrid strategy might be more robust in regimes of very high transmission loss where direct flying cat parity checks on data qubits would be too damaging.

Connections to related work

Let me close by explicitly situating this paper among a few key related works that I find particularly relevant.

Subsystem surface codes

The subsystem surface code of Bravyi, Duclos-Cianci, Poulin and Suchara [2] shows that replacing weight four checks by weight three gauge operators can preserve high thresholds while simplifying measurements, and it provides the main code theoretic motivation for focusing on three qubit parity checks here. McIntyre and Coish essentially design a physical mechanism that directly realizes the kind of three body checks that this code family wants, including an analysis of how its physical noise propagates into the gauge structure.

Noisy modular networks

Nickerson, Li and Benjamin [3] advocated for a modular design with small cells connected by very noisy photonic links and showed how repeated purification of inter cell entanglement could still enable surface code stabilizers across the network if intra cell errors are below about one percent. Flying cat parity checks can be seen as an alternative route to performing those long range checks directly, with coherent state probes in place of single photon links, and it will be interesting to compare thresholds and overheads between these two paradigms under the same error models.

Circuit QED and cat codes

The work also builds on the mature field of circuit QED and the use of cat states as bosonic codes for amplitude damping errors, as reviewed for example by Blais and coauthors [4] and by various cat code proposals. The novelty here is that the cat lives in a traveling mode instead of a stationary cavity, which shifts the focus from storage errors to loss during propagation, but many of the same techniques for cat state generation and homodyne detection carry over.

Quantum LDPC codes and long range checks

There is an active line of research on quantum low density parity check codes that achieve better asymptotic parameters than the surface code but require nonlocal check operators that are hard to realize with only nearest neighbor interactions [5]. Flying cat mediated parity checks provide a physically motivated way to realize such nonlocal checks in an architecture that is otherwise local within modules, which might make some of these LDPC constructions more realistic for hardware proposals.

My personal take away

Personally, what I like most about this work is that it starts from a very concrete physical ingredient, coherent state reflections in circuit QED, and systematically pushes it toward a full error model for a realistic stabilizer measurement in a modular architecture. The quantitative trade off between measurement misidentification and loss induced back action, with an explicit optimal point for the coherent state amplitude, gives me a useful design knob to think about in any future implementation.

At the same time, the paper raises more questions than it answers about global architecture, decoding strategies, and compatibility with other control techniques. For me that is a good sign, because it means flying cat parity checks are not just a clever trick, but a new primitive that may change how we design modular, fault tolerant quantum processors in the years to come.

References

Here I list only a short set of key references that I find most central to the discussion.

- [1] Z. M. McIntyre and W. A. Coish, "Flying-cat parity checks for quantum error correction", Phys. Rev. Research 6, 023247 (2024).

- [2] S. Bravyi, G. Duclos-Cianci, D. Poulin, and M. Suchara, "Subsystem surface codes with three-qubit check operators", Quantum Information and Computation 13, 963–985 (2013).

- [3] N. H. Nickerson, Y. Li, and S. C. Benjamin, "Topological quantum computing with a very noisy network and local error rates approaching one percent", Nature Communications 4, 1756 (2013).

- [4] A. Blais, A. L. Grimsmo, S. M. Girvin, and A. Wallraff, "Circuit quantum electrodynamics", Reviews of Modern Physics 93, 025005 (2021), and references therein for circuit QED implementations.

- [5] L. Z. Cohen, I. H. Kim, S. D. Bartlett, and B. J. Brown, "Low-overhead fault-tolerant quantum computing using long-range connectivity", Science Advances 8, eabn1717 (2022).