Table of Contents

- Introduction

- From static to dynamical codes

- Definition of a dynamical code

- Instantaneous stabilizer groups and measurement schedules

- Protection and masked distance

- Encoding and logical gates

- Which codes are dynamical

- How to decide if a code is dynamical

- Realizing dynamical codes in hardware

- From static codes to dynamical codes

- Approximate dynamical codes

- How dynamical codes relate to other coding paradigms

- Why I find dynamical codes exciting

- References

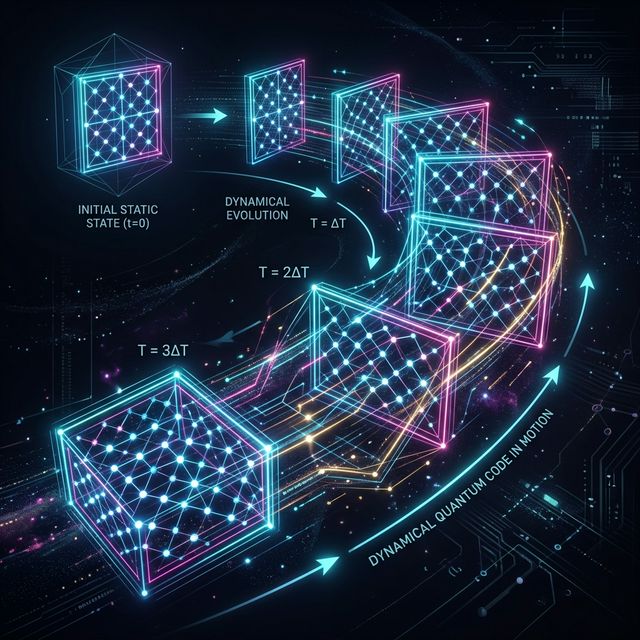

Visualizing the spacetime dynamics of a quantum code ferrying logical information through intermediate stabilizer states.

Introduction

When I first learned about quantum error correction, I imagined codes as static objects that quietly sit on a lattice while we measure the same set of checks over and over. Dynamical codes challenge this picture by weaving the code itself through time using a carefully designed sequence of measurements.

In this blog post I walk through what a dynamical code is, why it is useful, which concrete codes live in this family, and how one actually decides whether a given construction counts as a dynamical code. I will keep the discussion informal and example driven while still pointing to precise definitions from the research literature.

From static to dynamical codes

Most standard quantum error correcting codes, such as the surface code or the color code, are defined by a fixed stabilizer group. At any time the code space is the joint plus one eigenspace of a static set of stabilizer generators, and repeated stabilizer measurements simply project the state back into this subspace while revealing error syndromes.

In a dynamical code the stabilizers that define the code space are allowed to change in time according to a prescribed sequence of local measurements. The code space at a given round is still a stabilizer code, but the underlying stabilizer group may be different at the next round because we deliberately measure a new pattern of check operators.

One way to think about this is that a static code uses space to store redundancy, while a dynamical code also exploits the time direction. Logical information is ferried through a sequence of intermediate stabilizer codes, which together implement initialization, error detection and logical gates.

Definition of a dynamical code

The Error Correction Zoo gives a concise working definition that I find very helpful. A dynamical code is a stabilizer based quantum code defined by a (not necessarily periodic) sequence of few body measurements such that this sequence implements state initialization, logical gates and error detection.

More formally, after each measurement step the system is in the joint plus one eigenspace of an instantaneous stabilizer group which I denote by \( \mathsf{S}_{t} \). The next set of measurements is drawn from a group of check operators \( \mathsf{F} \), and these measurements map \( \mathsf{S}_{t} \) to a new instantaneous stabilizer group \( \mathsf{S}_{t+1} \), a process often called code switching.
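To make the update from \( \mathsf{S}_{t} \) to \( \mathsf{S}_{t+1} \) concrete, here is a minimal sketch of the standard stabilizer measurement rule, ignoring signs and measurement outcomes. The helper names (`pauli`, `commutes`, `rank`, `measure`) and the four qubit toy checks are my own illustrative choices, not taken from any of the cited papers.

```python
from typing import List, Tuple

Pauli = Tuple[int, int]  # (x bits, z bits); phases and signs are ignored


def pauli(label: str) -> Pauli:
    """Parse a string such as 'XIZY' into a pair of bitmasks."""
    x = z = 0
    for i, c in enumerate(label):
        if c in "XY":
            x |= 1 << i
        if c in "ZY":
            z |= 1 << i
    return x, z


def commutes(p: Pauli, q: Pauli) -> bool:
    """Two Paulis commute iff their symplectic product vanishes mod 2."""
    overlap = bin(p[0] & q[1]).count("1") + bin(p[1] & q[0]).count("1")
    return overlap % 2 == 0


def rank(gens: List[Pauli], n: int) -> int:
    """GF(2) rank of the generators, computed with an XOR basis."""
    basis: List[int] = []
    for x, z in gens:
        v = x | (z << n)
        for b in basis:
            v = min(v, v ^ b)  # clears the leading bit of b if v has it
        if v:
            basis.append(v)
    return len(basis)


def measure(gens: List[Pauli], p: Pauli, n: int) -> List[Pauli]:
    """Generators of S_{t+1} after measuring the check p on S_t."""
    anti = [i for i, g in enumerate(gens) if not commutes(g, p)]
    if not anti:
        # p commutes with S_t: add it unless it is already in the group.
        grows = rank(gens + [p], n) > rank(gens, n)
        return gens + [p] if grows else list(gens)
    pivot = gens[anti[0]]
    out = []
    for i, g in enumerate(gens):
        if i == anti[0]:
            out.append(p)  # the pivot is replaced by the measured check
        elif i in anti:
            # g * pivot commutes with p, so it survives the measurement
            out.append((g[0] ^ pivot[0], g[1] ^ pivot[1]))
        else:
            out.append(g)
    return out


n = 4
s_t = [pauli("XXII"), pauli("IIXX"), pauli("ZZZZ")]  # toy S_t
for check in ["ZIZI", "IZIZ"]:                       # next round of checks
    s_t = measure(s_t, pauli(check), n)
k = n - rank(s_t, n)  # number of logical qubits in the new frame
```

In this toy run the two qubit X checks are replaced by Z checks, while XXXX and ZZZZ survive as stabilizers and the number of encoded qubits stays at one.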

At every integer time \( t \) the codewords form an ordinary stabilizer code with parameters \( [[n,k,d(t)]] \), but the global error correcting power of the full protocol depends on the entire history of measurements, not just on any single snapshot. This temporal aspect is what makes defining quantities such as the distance of a dynamical code both subtle and interesting.

Instantaneous stabilizer groups and measurement schedules

To build intuition I like to picture a dynamical code as a movie made of static codes. Each frame in the movie shows a stabilizer code defined by an instantaneous stabilizer group, and neighbouring frames are related by a local measurement update rule.

The check operators in the measurement schedule are usually few body Pauli operators, often of weight two or three, that can be implemented with local interactions on a lattice. A complete schedule might be periodic, as in many Floquet codes, or aperiodic when we want to realize particular logical gates through a tailored sequence.

A key difference from subsystem codes is that not every sequence of legal local checks preserves the logical information. Only specific sequences qualify as valid dynamical code protocols, and some measurement orders may even irreversibly lose parts of the syndrome information.

Protection and masked distance

Because stabilizers can be temporarily hidden by the dynamics, protection in a dynamical code is not characterized only by the usual static code distance. Instead, one tracks how much syndrome information about different stabilizers is actually available at each step of the measurement protocol.

Fu and Gottesman [3] introduced an efficient classical algorithm that, given a measurement schedule, classifies each stabilizer into one of three types.

- An unmasked stabilizer is one whose eigenvalue can in principle be recovered from the measurement record, possibly as a product of several measured checks.

- A temporarily masked stabilizer is one whose eigenvalue is not yet known but could be inferred by future measurements in the schedule.

- A permanently masked stabilizer is one whose eigenvalue can never be reconstructed because the information was irrevocably lost by past measurements.

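Given the set \( U \) of masked stabilizers, one defines a masked distance as the smallest weight of a Pauli operator that lies in the normalizer of \( U \) but outside the gauge group, which I write as

\[ d_{U} = \min_{P \in \mathsf{N}(U) \setminus \mathsf{G}} \mathrm{wt}(P), \]

where \( \mathsf{N}(U) \) is the normalizer of \( U \) and \( \mathsf{G} \) is a gauge group determined by the algorithm and by the measurement schedule. This parameter quantifies how well the code protects against errors that act nontrivially on the still masked stabilizers.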

In practice the masked distance can be smaller or larger than the distance of any instantaneous stabilizer code along the trajectory, so it is a genuinely dynamical notion rather than a simple minimum or maximum over time. For me this illustrates how the temporal structure really becomes part of the code.
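For small examples one can evaluate a quantity of this kind by brute force. The sketch below assumes the masked distance is the minimum weight of a Pauli that commutes with every element of \( U \) but is not in \( \mathsf{G} \) (up to phase). That reading, the function names and the four qubit inputs are my own assumptions, and the search is exponential in the number of qubits, unlike the efficient algorithm of Fu and Gottesman.

```python
from itertools import combinations, product
from typing import List, Optional, Tuple

Pauli = Tuple[int, int]  # (x bits, z bits); phases are ignored


def pauli(label: str) -> Pauli:
    x = z = 0
    for i, c in enumerate(label):
        if c in "XY":
            x |= 1 << i
        if c in "ZY":
            z |= 1 << i
    return x, z


def commutes(p: Pauli, q: Pauli) -> bool:
    overlap = bin(p[0] & q[1]).count("1") + bin(p[1] & q[0]).count("1")
    return overlap % 2 == 0


def _reduce(v: int, basis: List[int]) -> int:
    for b in basis:
        v = min(v, v ^ b)  # clears the leading bit of b if v has it
    return v


def in_group(p: Pauli, gens: List[Pauli], n: int) -> bool:
    """True if p is a product of the generators, up to phase."""
    basis: List[int] = []
    for x, z in gens:
        v = _reduce(x | (z << n), basis)
        if v:
            basis.append(v)
    return _reduce(p[0] | (p[1] << n), basis) == 0


def masked_distance(u: List[Pauli], g: List[Pauli], n: int) -> Optional[int]:
    """Minimum weight of a Pauli in N(U) but not in G, by exhaustive search."""
    for w in range(1, n + 1):
        for support in combinations(range(n), w):
            for letters in product("XYZ", repeat=w):
                label = ["I"] * n
                for q, c in zip(support, letters):
                    label[q] = c
                p = pauli("".join(label))
                if all(commutes(p, s) for s in u) and not in_group(p, g, n):
                    return w
    return None  # every Pauli in N(U) is already in G


n = 4
group = [pauli("XXXX"), pauli("ZZZZ")]
d_full = masked_distance(group, group, n)                # both checks constrain errors
d_partial = masked_distance([pauli("XXXX")], group, n)   # only XXXX constrains errors
```

Dropping ZZZZ from the commutation constraint lowers the result from 2 to 1, since a single X error then goes unnoticed. This is the kind of effect the masking bookkeeping is meant to capture.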

Encoding and logical gates

One of the appealing aspects of dynamical codes is that encoding and gate implementation are just particular choices of measurement schedules. In a code with \( r \) stabilizer generators, a suitable schedule can initialize the code space in at most \( r \) measurement cycles, which is essentially optimal.

Dynamic automorphism color codes, often abbreviated DA color codes [2], provide an elegant example. A stack of triangular patches of the two dimensional DA color code can encode \( N \) logical qubits, and by performing tailored two and three qubit measurements between the patches one can implement the full Clifford group of logical gates.

In three dimensions a related DA color code variant supports a non Clifford logical gate using adaptive two qubit measurements. This shows that dynamical schedules can realize gates beyond the Clifford group while remaining local in space and time.

Which codes are dynamical

The dynamical code entry in the Error Correction Zoo sits high in a small hierarchy. It has quantum domain and qubit code as ancestors, and it is specifically the parent for several families of Floquet codes and DA color codes.

The historically first explicit example is the Hastings Haah Floquet code [1]. This is a two dimensional dynamical code whose measurement schedule is strictly periodic, so it is sometimes described as the prototypical periodic Floquet code.

Beyond that prototype, a whole zoo of Floquet codes are explicitly identified as dynamical. The Floquet list in the Error Correction Zoo includes honeycomb Floquet codes, ladder Floquet codes, hyperbolic Floquet codes, and several three dimensional Floquet codes, all of which are built from periodic sequences of local Pauli measurements on suitable lattices.

For example, the honeycomb Floquet code lives on a three valent three colorable lattice where different edge colors correspond to different Pauli checks that are cycled in time. Logical qubits appear and move as we run through the measurement cycle, and the code enjoys automatic Clifford gate processing because of these dynamics.

Another striking example is the X cube Floquet code. Here a periodic schedule of two qubit measurements on a three dimensional lattice interpolates between layers of X cube fracton order and stacks of entangled two dimensional toric codes, and the number of logical qubits grows with system size. This construction again fits perfectly into the dynamical code framework because the logical information is defined by the full spacetime measurement pattern rather than by any single static stabilizer group.

Recent work has also introduced Floquet codes tailored for biased noise, such as the X\(^{3}\)Z\(^{3}\) Floquet code. In this design the measurement schedule is optimized so that decoding reduces to a simplified problem that takes advantage of the underlying symmetry of the dynamics, leading to improved thresholds under certain noise models.

How to decide if a code is dynamical

Given a new proposal for a quantum code, how can I tell whether it belongs in the dynamical class? In practice I check a small list of criteria that distills the definition used in the theory papers and in the Error Correction Zoo entry.

Stabilizer based description

First, there must be a stabilizer based description at each time step. That is, after every measurement round the valid code states should form the plus one eigenspace of some stabilizer group, possibly with gauge degrees of freedom if we reinterpret the construction as a subsystem code.

This excludes more exotic schemes where the code space is only approximately preserved or where the dynamics are not captured by stabilizer formalism. Approximate dynamical codes do exist, but in that case one explicitly relaxes the exact error correction conditions while still tracking the time dependent structure.

Specified measurement schedule

Second, the code is defined by a concrete sequence of measurements drawn from a fixed set of check operators. The sequence can be periodic, quasi periodic or even finite, but it must be part of the code specification itself, not just an arbitrary choice of stabilizers we happen to measure.
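One way to make "the schedule is part of the code" tangible is to store it as data next to the check operators. The following is a minimal sketch under my own assumptions: the `Schedule` class, its fields and the toy checks are hypothetical and do not come from any particular library or paper.

```python
from dataclasses import dataclass
from itertools import cycle, islice
from typing import Iterator, List, Optional


@dataclass(frozen=True)
class Schedule:
    """A measurement schedule: an ordered list of rounds of Pauli checks."""
    rounds: List[List[str]]   # each round is a list of Pauli labels
    periodic: bool = True     # repeat the rounds forever, or run them once

    def run(self, steps: Optional[int] = None) -> Iterator[List[str]]:
        """Yield the checks to measure, round by round."""
        source = cycle(self.rounds) if self.periodic else iter(self.rounds)
        return islice(source, steps)


# A periodic toy schedule alternating between X type and Z type checks.
toy = Schedule(rounds=[["XXII", "IIXX"], ["ZIZI", "IZIZ"]])
first_five = list(toy.run(5))

# The same rounds used once, for example as a finite gate sequence.
finite = Schedule(rounds=toy.rounds, periodic=False)
once = list(finite.run())
```

Calling `run()` without a step limit on a periodic schedule yields rounds indefinitely, so in practice one passes the number of rounds to simulate.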

This is where dynamical codes differ from monitored random circuit codes. In random circuit codes one still has an instantaneous stabilizer group that evolves through gates and measurements, but the gates and measurements are drawn at random, so every allowed choice and outcome is part of an ensemble. A dynamical code instead prescribes a particular schedule that realizes initialization, gates and error detection.

Logical operations from dynamics

Third, the protocol should use the dynamics to implement nontrivial logical operations. For DA color codes, for instance, the motion of domain walls induced by the measurement sequence realizes Clifford gates and certain non Clifford gates in a very geometric way.

Even when the logical gate set is not fully optimized, researchers usually analyze which logical operators are supported and how they transform over a full measurement cycle. If the only effect of the time dependence is to reproduce a static code with no additional benefits, the construction is better viewed as a complicated way to realize a static stabilizer code.

Error detection along the trajectory

Finally, the full schedule must admit a consistent decoding procedure that uses the whole history of measurement outcomes. The Fu and Gottesman framework does this by tracking masked stabilizers and computing the effective distance as the measurement record accumulates.

In concrete proposals one typically simulates the protocol under a circuit level noise model and studies logical error rates and thresholds using decoders that explicitly incorporate the time dependent structure. The success of such decoders is strong evidence that the construction really behaves as a dynamical code and not just as a sequence of unrelated static checks.

Realizing dynamical codes in hardware

On the hardware side dynamical codes are attractive because they can reduce the weight and connectivity requirements of check measurements compared with static topological codes of similar performance. Many Floquet codes use only weight two Pauli measurements on degree three lattices, a connectivity pattern that is friendly to several leading architectures.

Experimental and architectural studies suggest that dynamical codes are promising for platforms with native two qubit parity measurements and noisy single shot readout. By carefully choosing the schedule one can trade additional rounds of measurements in time for simpler connectivity in space and sometimes for higher thresholds in biased noise regimes.

Majorana based qubit designs offer another natural home for dynamical codes. In these systems one can turn on and off local couplings between Majoranas in a pattern that effectively enacts the required measurement schedule, and recent work has evaluated the performance of planar Floquet codes implemented in such architectures.

From static codes to dynamical codes

One of my favourite conceptual messages from recent work is that dynamical codes are not an isolated species. In fact, any qubit stabilizer code with bounded weight generators can be converted into a dynamical code through suitable measurement gadgets, a process sometimes called Floquetification.

Rodatz, Poór and Kissinger [5] showed that using ZX calculus one can transform an \( [[n,k,d]] \) qubit stabilizer code with generator weight at most \( m \) into an \( [[n+\lceil m/2 \rceil + \ell, k, d']] \) dynamical code built only from single and two qubit operations, where \( \ell \leq \log_{2} m \) and the new distance obeys \( d' \geq d \). This transformation can often be applied while keeping the interactions local in a low dimensional layout, which is important for practical fault tolerance.
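To get a feel for the qubit overhead in this formula, here is a small arithmetic sketch. It assumes \( \ell \) is a nonnegative integer, which is my reading of the bound rather than something stated above, and the function name is hypothetical.

```python
import math
from typing import Tuple


def floquetified_qubit_range(n: int, m: int) -> Tuple[int, int]:
    """Smallest and largest value of n + ceil(m/2) + l for 0 <= l <= log2(m)."""
    base = n + math.ceil(m / 2)
    max_l = m.bit_length() - 1  # floor(log2(m)) for a positive integer m
    return base, base + max_l


# Distance three rotated surface code: [[9, 1, 3]] with checks of weight at most 4.
low, high = floquetified_qubit_range(n=9, m=4)
```

For this example the formula gives between 11 and 13 physical qubits, so the overhead is a small additive constant rather than a multiplicative factor.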

More generally, Xu and Dua developed a spacetime concatenation framework. Here one views the dynamical code as the result of concatenating small spatial gadgets for each qubit with temporal gadgets that couple different time slices, under conditions that preserve locality, fault tolerance and spacetime distance.

These results convince me that dynamical codes should be regarded less as exotic outliers and more as a flexible way to package familiar stabilizer codes into protocols that better match hardware constraints.

Approximate dynamical codes

So far I have focused on exact error correction, where logical information can be perfectly recovered from any error whose weight stays below a bound set by the distance. Approximate dynamical codes relax this requirement and only demand that logical noise be suppressed up to a specified tolerance.

Basak and collaborators [4] constructed several approximate dynamical codes within the strategic code framework and analyzed them using semidefinite programming techniques. They also introduced a temporal version of the Petz recovery map that is specifically tailored to the time dependent structure of these codes.

Approximate schemes are particularly attractive in near term settings where we may not have enough qubits or coherence time to reach fully fault tolerant regimes but can already gain significant robustness against dominant noise processes. In my view, combining approximate quantum error correction with dynamical schedules is a promising direction for practical applications.

Why I find dynamical codes exciting

To close, I want to share why I personally find dynamical codes so compelling. First, they encourage me to think of error correction as a spacetime object, not just as a static subspace or a stabilizer table.

Second, they seem to match hardware realities surprisingly well. Many near term platforms provide simple local interactions and repetitive measurements more naturally than they provide large weight multi qubit checks, and dynamical codes are tailor made to exploit exactly that regime.

Third, the theory is still developing rapidly. New notions like masked distance, spacetime concatenation and approximate dynamical recovery maps give us a refined language to talk about protection in the time direction, with direct implications for how we design decoders and benchmarks.

If you like thinking about quantum error correction as an interplay between topology, algebra and dynamics, then dynamical codes offer a very rich playground that is only starting to be mapped out. I hope this post helped sketch the landscape and pointed you to some of the key constructions and ideas.

References

- [1] M. B. Hastings and J. Haah, "Dynamically Generated Logical Qubits," Quantum 5, 564 (2021), arXiv:2107.02194.

- [2] M. Davydova, N. Tantivasadakarn, S. Balasubramanian, and D. Aasen, "Quantum computation from dynamic automorphism codes," Quantum 8, 1448 (2024), arXiv:2307.10353.

- [3] E. X. Fu and D. Gottesman, "Error Correction in Dynamical Codes," Quantum 9, 1886 (2025), arXiv:2403.04163.

- [4] N. Basak, A. Tanggara, A. Mohan, G. Paul, and K. Bharti, "Approximate Dynamical Quantum Error Correcting Codes," arXiv:2502.09177 (2025).

- [5] B. Rodatz, B. Poór, and A. Kissinger, "Floquetifying stabiliser codes with distance-preserving rewrites," arXiv:2410.17240 (2024).